The most important design decision I have made in the last year did not happen during a design phase.

It happened on a Thursday morning, three months after a product had launched, when the on-call engineer mentioned in passing that our support inbox kept getting the same five words typed at us over and over: “how do I turn off…” Almost every time, the user was trying to disable a notification pattern we had, with great care and good intentions, designed to be helpful. The interface offered two ways to silence it; neither of them was the place users were looking. We had built a setting. We had documented it. We had even tested its discoverability during pre-launch research. And we were wrong, visibly and at scale, about where people were going to look for it.

That Thursday morning is, in a quiet way, the thing this post is about. The design that matters, the design that people actually use, is almost never the design that ships on launch day. It is the design that exists four weeks after launch, eight weeks after launch, twelve weeks after launch, each version incorporating new evidence the pre-launch process could not have produced.

In the industry I trained in, this rarely gets treated as a design problem. It gets treated as a “product roadmap” problem, a “growth” problem, a “support ticket” problem. Once the designers leave, it becomes whatever problem the people left behind have vocabulary for.

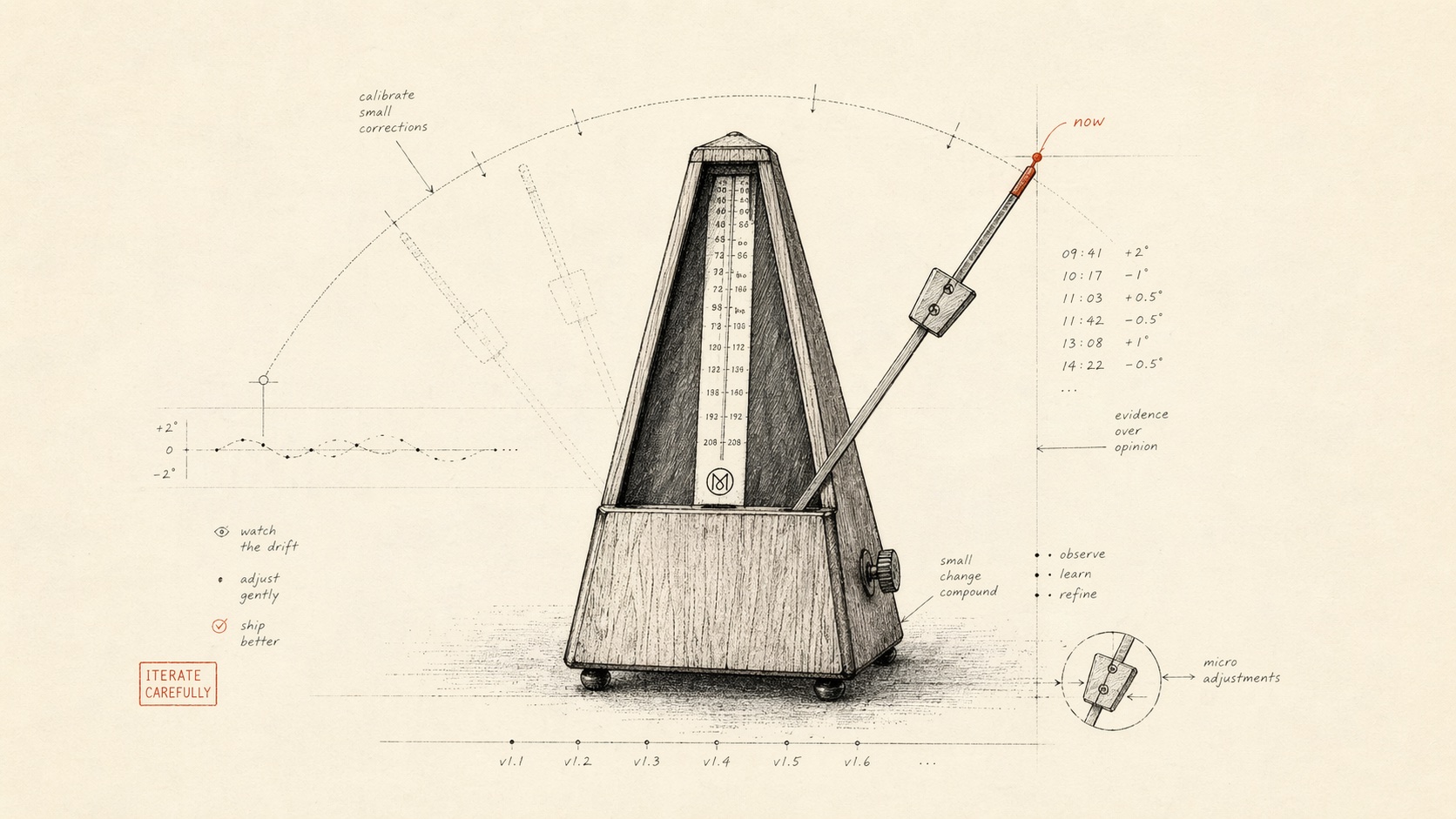

I think this is a mistake. And I think fixing it — treating product design as a continuous tempo rather than a discrete delivery phase — changes what design is actually for.

The inherited model and what it quietly costs

If you came up in the consulting or agency tradition, you know the shape: discovery → concept → wireframes → high-fidelity → handoff → launch. Each step has a deliverable. Each step is signed off. The implicit claim is that by the time handoff happens, the design is done.

I do not know a single designer who believes this at v1. We all ship with a list of compromises we intend to come back to. What almost never happens, in the default industry shape, is that we actually come back. The engagement closes. The design deliverables are finalized. Someone else, typically someone who did not participate in any of the original decisions, inherits both the finished design and the list of quiet compromises that went with it.

The result is what I think of as design’s answer to technical debt — not unfinished features, not missing states, but accumulated friction in places the original design team knew were fragile and the inheriting team has no reason to recognize as fragile. The original designers had the context. They left. The context left with them.

This plays out most visibly in three places, which I will name because I find them useful to watch for:

- Forms that drift off-spec. A form that had a specific validation rhythm at launch quietly becomes eighteen forms, twelve of them copied from the original by someone who did not know which details in the original were load-bearing.

- Empty states that age badly. Copy written for the product’s first three weeks (“Nothing yet — check back after you invite teammates”) still reads six months later, when that state means something else entirely.

- Settings that accrete. Every new feature adds a setting. No one removes a setting. After a year, settings pages are archaeological layers, and the information architecture that looked clean at v1 no longer matches what people are trying to do.

None of these are, strictly, broken. They are, all three, quietly failing.

What it means to make design an operating tempo

The alternative I want to describe is not “designers are on-call forever.” It is that the design system — the decisions, the reasoning, the trade-offs — continues to be edited, with intention, as real evidence comes in. Edited as an operational activity, at a specific cadence, by specific people, against specific signals.

In our work, this has a shape I will describe concretely.

1. Design review does not end at launch — it shifts cadence

Before launch, we run design review on the project’s natural rhythm, typically weekly. After launch, for at least the first twelve weeks, we drop to a bi-weekly operating review (one every two weeks), which is shorter, tighter, and has a different input: not “here are new screens,” but “here is what the product did in the last two weeks that none of us expected.”

The input format is deliberately mundane: a short document with three columns — what changed in the data, what we heard from support, what we are going to redesign, dim, remove, or clarify in the next two weeks. No hero mocks. No design-thinking theater. This is not where new products get invented; this is where shipped products get corrected.
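For teams that keep this document in a repository rather than a doc tool, the three columns can be sketched as a small data structure. This is an illustration only: the class names, field names, and the `is_actionable` check are hypothetical, and the only parts taken from the process itself are the three columns and the four verbs (redesign, dim, remove, clarify).

```python
from dataclasses import dataclass, field

# The four corrective verbs named in the text; everything else here is hypothetical.
ACTIONS = ("redesign", "dim", "remove", "clarify")


@dataclass
class PlannedChange:
    action: str  # must be one of ACTIONS
    target: str  # free-text name of the screen, setting, or copy involved

    def __post_init__(self) -> None:
        if self.action not in ACTIONS:
            raise ValueError(f"unknown action: {self.action!r}")


@dataclass
class OperatingReview:
    data_changes: list[str] = field(default_factory=list)       # column 1: what changed in the data
    support_themes: list[str] = field(default_factory=list)     # column 2: what we heard from support
    planned: list[PlannedChange] = field(default_factory=list)  # column 3: what we will change

    def is_actionable(self) -> bool:
        """True when at least one signal came in and at least one change is planned."""
        has_signal = bool(self.data_changes or self.support_themes)
        return has_signal and bool(self.planned)


# Illustrative entry based on the notification story that opens this post.
review = OperatingReview(
    support_themes=["Repeated 'how do I turn off...' messages about notifications"],
    planned=[PlannedChange("clarify", "where the notification setting lives")],
)
print(review.is_actionable())
```

Restricting the third column to four verbs is the point of the sketch: it keeps the review about correcting what shipped, not proposing what is new.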

2. Design changes ship on the operational cadence, not the project cadence

In the default model, “a design change post-launch” triggers a small project — write a spec, get it on the roadmap, slot it, ship it. That cadence is too slow to keep up with the signals.

We instead maintain, past v1, a pre-approved budget of small design changes — typically five to fifteen per bi-weekly cycle — that can ship without a new engagement. Copy corrections, form tweaks, empty-state updates, setting hierarchy shifts, microcopy in error paths. Each one takes an hour or two. Accumulated over twelve weeks, they do more for the product’s usability than any single post-launch redesign I have ever been part of.
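To make the scale concrete, here is the arithmetic behind that claim as a minimal sketch. It takes the figures above at face value (five to fifteen changes per two-week cycle, one to two hours each, twelve weeks); the function name and parameters are made up for illustration, and real cycles will not fill their budget this evenly.

```python
def change_budget(weeks, per_cycle, hours_each, cycle_weeks=2):
    """Return (cycles, (min_changes, max_changes), (min_hours, max_hours))."""
    cycles = weeks // cycle_weeks
    changes = (cycles * per_cycle[0], cycles * per_cycle[1])
    hours = (changes[0] * hours_each[0], changes[1] * hours_each[1])
    return cycles, changes, hours


cycles, changes, hours = change_budget(weeks=12, per_cycle=(5, 15), hours_each=(1, 2))
print(cycles, changes, hours)  # 6 (30, 90) (30, 180)
```

Under those assumptions, twelve weeks is six cycles, or somewhere between 30 and 90 small changes for roughly 30 to 180 hours of design time.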

3. The design system is treated as a living document with owners

A design system that is frozen at launch becomes an archaeology project within a year. We ship every design system with an operating spec — who owns it, what triggers a change, how changes are recorded, how old decisions are retired.

The practical effect: a year after launch, the design system matches the product as it actually is, not the product as it was proposed. It remains usable by new contributors — internal or external — without requiring them to first excavate why a given decision looks the way it does.

How this looked in two cases

A museum hardware partner — where the post-launch design change was the important one

One of our hardware engagements ships a guide device into museums alongside a companion mobile app. I’ll describe what we learned in the first four weeks of real-floor deployments without naming the partner — the learning is generalizable, and the details are theirs.

At v1, the app’s primary job was device pairing and content playback control. We had carefully designed for the “visitor using one device” case, which was the default in pre-launch research.

Museum floor staff do not use the device that way. They use it in clusters of twenty, quickly, often with visitor groups who speak two or three languages in the same room. The app’s default view, which sat cleanly on “playing content for the current visitor,” was the wrong default for the floor-staff use case. They needed a “device-group status” view that did not exist in the launch design.

If we had run a traditional engagement, this would have become a Phase 2 project: specced, estimated, negotiated, scheduled, and delivered the following quarter. Instead, the operating-cadence design review in week three surfaced the pattern, the design team sketched three options on a shared canvas that Friday, and a new top-level view shipped the following Wednesday. It was not the right final design; it was a deliberately light version that absorbed the signal and created room to iterate. The “right” design emerged three cycles later, built on data rather than on meetings.

mymemo — where the hard work was removing features, not adding them

Our other product partnership, mymemo, shipped with a richer feature set than, in hindsight, it needed. This is a predictable consequence of building a consumer AI product in 2025: it is cheap to add a capability, and every capability comes with a new control surface. By the end of month two, the app had drifted into a state where the things that made it distinctive were harder to find than the things that were generic.

In the operating-cadence design review, the movements we made were almost entirely subtractive. A feature that was technically working got buried under a disclosure. A setting that mattered to 0.8% of sessions lost its top-level slot. A navigation item that we had loved, pre-launch, for being interesting lost to a navigation item that turned out to be used.

This kind of editing is awkward in the traditional delivery model. Subtracting things inside a closed engagement feels like admitting a mistake; subtracting things inside an operating cadence feels like doing the job. The change in framing matters more than it sounds like it should.

What this shifts

If you are a client thinking about where a design partner fits in your organization, the frame I would offer is: the design work that happens before launch is smaller than the design work that happens after launch. An engagement that ends at handoff is completing, at best, a third of the job. The more ambitious the product, the more pronounced this is.

If you are a designer thinking about where your own work fits, the frame I would offer is: the decisions that turn out to matter are almost always the ones made with real evidence in hand. A career shape that routes you out of every product the moment the evidence arrives is a career shape optimized for producing work you never learn from. I have found the opposite — staying past launch, at an operating tempo — to be the only reliable way to get better at this job.

The design that ships is a hypothesis. The tempo that follows is how it becomes a product.